If we’ve been around for any length of time, we’ve all got that project that “went south”. The one that still bugs me at times was almost ten years ago, when a client asked for help to develop a new product that would provide customers with telemetry data on software use. (You know, the kind of thing that Microsoft does when you click the box that sends usage data on SQL Server to them.)

This data is really useful to software companies, as they are then able to understand what features of their applications are being used, and I was excited to be part of the process.

Then politics got involved.

Company management decided that Analysis Services was not trustworthy, yet one of the key features of the solution was to allow customers to effectively slice and dice the reported metrics to see how and when features were to be used. No, the principal tool for just that capability was not part of the plan, it all had to be done in Transact-SQL against a relational database.

(Have I mentioned that I firmly believe that you should always use the best tool for the task? I talk about using PowerShell frequently, but it’s just one tool. Use it as it’s appropriate.)

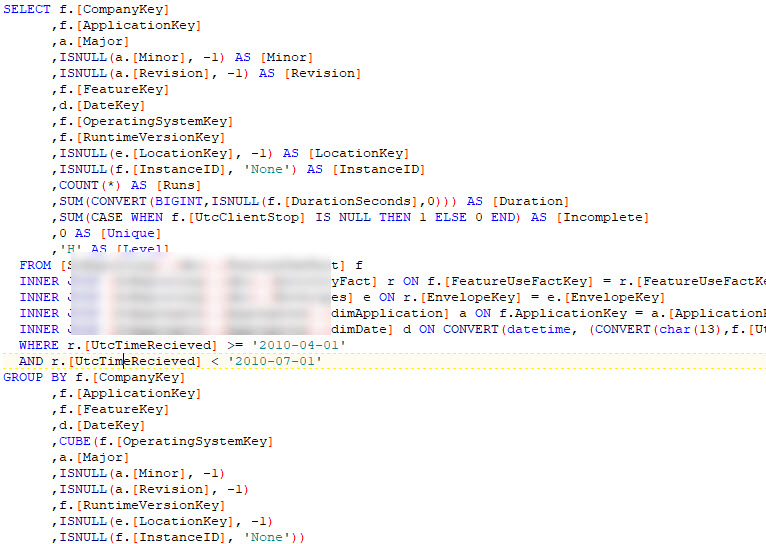

So, I had to do the aggregation via T-SQL, using CUBE and other methods to insert the pre-aggregated data at multiple levels in relational tables. I had to pre-aggregate them because the queries to do the aggregation would be too slow for customers logging in to view their usage data in real-time.

Here’s the kind of query I had to run in the processing of the raw data:

Yes, that’s a CUBE in the GROUP BY. <shudder>

So, depending on version, and date, and level, and location, etc., the process would return the aggregated metrics. After months of working out the details I got everything working, but there were two problems.

First, even with the pre-aggregation, the results weren’t coming fast enough. I did the best I could to index the data properly, but nothing seemed to make enough of a difference.

Then, the lead developer of the code that did the telemetry data extraction left the company, and the new lead told me that the data I was using as my source was all wrong, and the base foundation had to be completely re-written.

Let’s just say that the relationship went south at that point.

I still feel sad we couldn’t get this to work. I put a lot of time into the project, and in fact, thought the concept was pretty cool. When I was with SentryOne they implemented code to gather telemetry data, and it really made (and continues to make) significant difference in understanding how customers use the products.

This project could have worked, but we had two problems common to all failed projects: 1) Inappropriate constraints (not using Analysis Services in this case), and 2) Changing specifications.

It’s pretty difficult to get around the first problem, and it may just be best to walk away from the project, if the constraints are such that failure is likely.

Documentation and customer signoff on specs are really the only way to deal with the second. Make sure that any change in the specs is documented with the date and an estimate of the impact of that change on the outcome. It may seem tedious at times, but it will help you succeed in getting the project finished.

1 thought on “T-SQL Tuesday #107 – The Project That Went South”

Comments are closed.